
Get prebuilt Spark

Jul 10, 2015: Spark + pyspark setup guide. This is a guide for installing and configuring an instance of Apache Spark and its Python API, pyspark, on a single machine running Ubuntu 15.04 (Kristian Holsheimer, July 2015). If git is not already installed, install it with `sudo apt-get install git`. Next, install Py4J, a package that is essential for running pyspark: `sudo pip install py4j`. With these prerequisites in place, we move on to installing Apache Spark itself.
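A minimal Python sketch of the download step, assuming the Apache archive layout under https://archive.apache.org/dist/spark and the Spark 2.1.0 / Hadoop 2.7 build that the later steps refer to. From a shell, the equivalent is `wget` on the same URL followed by `tar -xzf spark-2.1.0-bin-hadoop2.7.tgz`. The helper names `spark_tarball_url` and `download_and_extract` are hypothetical.

```python
import tarfile
import urllib.request
from pathlib import Path

# Assumption: Apache's archive layout; this guide uses Spark 2.1.0 built for Hadoop 2.7.
ARCHIVE = "https://archive.apache.org/dist/spark"


def spark_tarball_url(spark_version: str = "2.1.0", hadoop_version: str = "2.7") -> str:
    """Return the download URL of a prebuilt Spark tarball."""
    name = f"spark-{spark_version}-bin-hadoop{hadoop_version}"
    return f"{ARCHIVE}/spark-{spark_version}/{name}.tgz"


def download_and_extract(url: str, dest_dir: str = ".") -> Path:
    """Download the tarball into dest_dir (unless already there) and unpack it."""
    dest = Path(dest_dir).expanduser()
    dest.mkdir(parents=True, exist_ok=True)
    archive = dest / url.rsplit("/", 1)[-1]
    if not archive.exists():
        urllib.request.urlretrieve(url, archive)
    with tarfile.open(archive, "r:gz") as tar:
        tar.extractall(dest)
    # The tarball unpacks into a directory named like the archive minus ".tgz".
    return dest / archive.name[: -len(".tgz")]
```

For example, `download_and_extract(spark_tarball_url(), "~")` would leave the distribution in `~/spark-2.1.0-bin-hadoop2.7` (unpacking into the home directory is an assumption; any writable directory works).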

Test the prebuilt Spark (this should open a Spark console; use Ctrl+C to exit)
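Before opening the console, it can help to confirm that the launcher scripts ended up where you expect. From a shell, running `./bin/spark-shell` (Scala) or `./bin/pyspark` (Python) inside the unpacked directory opens the console directly. The sketch below uses a hypothetical helper, `spark_launcher`, to do that check from Python.

```python
import os
from pathlib import Path


def spark_launcher(spark_home: str, name: str = "spark-shell") -> Path:
    """Return the path of a Spark launcher script such as spark-shell or pyspark.

    Raises FileNotFoundError if the script is missing or not executable, which
    usually means the tarball was not unpacked where you think it was.
    """
    launcher = Path(spark_home).expanduser() / "bin" / name
    if not (launcher.is_file() and os.access(launcher, os.X_OK)):
        raise FileNotFoundError(f"no executable launcher at {launcher}")
    return launcher
```

For example, `subprocess.run([str(spark_launcher("~/spark-2.1.0-bin-hadoop2.7", "pyspark"))])` hands the terminal to the interactive console until you exit. The home-directory location is an assumption.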

Get virtualenv: we assume your Python is installed under your home directory, so no sudo is needed.

If you want to install Python under your home directory, get the Python source tarball and run `./configure --prefix=any/dir/of/your/choice/where/you/have/write/access`. Then run `make install` and add the new Python's `bin` directory to your `$PATH` environment variable.

To install virtualenv:
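Sketched in Python, installing virtualenv amounts to running the home-directory interpreter's own pip, assuming pip is available for it; from a shell this is `python -m pip install virtualenv`. Calling pip through `python -m pip` ties the install to that exact interpreter, which is why no sudo is needed. `virtualenv_install_command` and `install_virtualenv` are hypothetical helpers.

```python
import subprocess
import sys
from typing import List


def virtualenv_install_command(python: str = sys.executable) -> List[str]:
    """argv that installs virtualenv with the given Python's own pip."""
    # "python -m pip" installs into that interpreter's site-packages; with a
    # Python under $HOME, that location is writable without sudo.
    return [python, "-m", "pip", "install", "virtualenv"]


def install_virtualenv(python: str = sys.executable) -> None:
    """Run the install, raising CalledProcessError if pip fails."""
    subprocess.run(virtualenv_install_command(python), check=True)
```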

Start a new virtualenv
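The guide uses the `virtualenv` tool for this step (for example `virtualenv ~/spark-env`; the directory name is an assumption). As a swapped-in alternative, the sketch below uses Python 3's standard-library `venv` module, which creates an equivalent isolated environment without an extra package. `create_env` is a hypothetical helper, and the `bin/python` path assumes a POSIX layout.

```python
import venv
from pathlib import Path


def create_env(env_dir: str, with_pip: bool = True) -> Path:
    """Create a fresh virtual environment and return the path of its python."""
    # Stand-in for `virtualenv <dir>`: the stdlib venv module does the same job
    # on Python 3.3+.
    env = Path(env_dir).expanduser()
    venv.create(env, with_pip=with_pip)
    return env / "bin" / "python"  # POSIX layout; Windows uses Scripts\python.exe
```

Either way, activate the environment in your shell with `source ~/spark-env/bin/activate` before installing packages into it.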

Get the necessary scientific Python packages
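The exact package list was not preserved here, so this sketch assumes a typical set for pyspark work in Jupyter, installed inside the activated environment with `pip install numpy scipy pandas matplotlib jupyter`. Afterwards, the hypothetical `missing_modules` helper, run with the environment's Python, reports anything that still cannot be imported.

```python
import importlib.util
from typing import Iterable, List

# Assumption: a typical scientific stack; adjust to the packages you actually need.
SCIENTIFIC_PACKAGES = ["numpy", "scipy", "pandas", "matplotlib", "jupyter"]


def missing_modules(names: Iterable[str]) -> List[str]:
    """Return the top-level module names the current interpreter cannot import."""
    return [name for name in names if importlib.util.find_spec(name) is None]
```

For example, `print(missing_modules(SCIENTIFIC_PACKAGES))` should print an empty list once the install has succeeded.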

Edit ~/.bashrc or spark-2.1.0-bin-hadoop2.7/conf/spark-env.sh

Paste the following into spark-2.1.0-bin-hadoop2.7/conf/spark-env.sh (this file does not exist by default, so you have to create it):

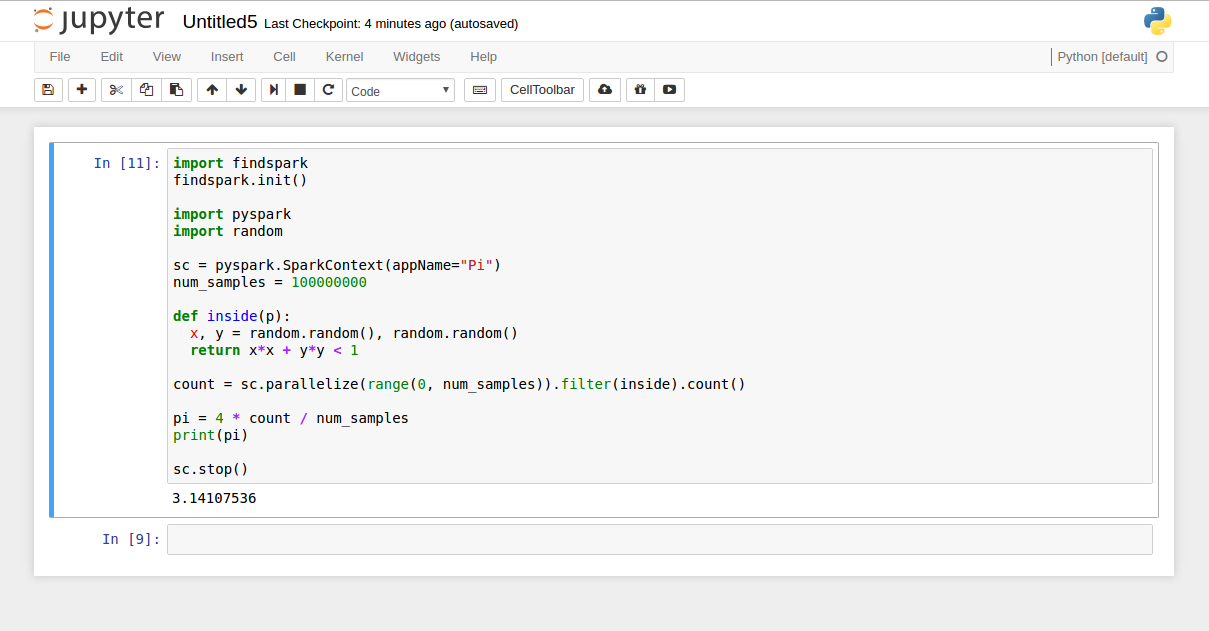
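The settings themselves survive only as a screenshot. As an assumption, a common configuration points `PYSPARK_PYTHON` at the virtualenv's interpreter and sets `PYSPARK_DRIVER_PYTHON=jupyter` with `PYSPARK_DRIVER_PYTHON_OPTS='notebook'`, so that `bin/pyspark` (which sources `conf/spark-env.sh`) starts its driver inside a Jupyter notebook. The hypothetical `write_spark_env` below creates the file with those lines; compare them against the screenshot before relying on them.

```python
from pathlib import Path

# Assumption: standard pyspark variables, not a transcription of the screenshot.
# PYSPARK_DRIVER_PYTHON=jupyter requires jupyter on $PATH (e.g. an activated virtualenv).
TEMPLATE = """\
export PYSPARK_PYTHON={python}
export PYSPARK_DRIVER_PYTHON=jupyter
export PYSPARK_DRIVER_PYTHON_OPTS='notebook'
"""


def write_spark_env(spark_home: str, env_python: str) -> Path:
    """Create conf/spark-env.sh (absent by default) with the pyspark settings."""
    path = Path(spark_home).expanduser() / "conf" / "spark-env.sh"
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(TEMPLATE.format(python=env_python))
    return path
```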

Start a Jupyter notebook with pyspark (adjust the number of local worker threads, [4], to suit your machine)
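One way to launch it, assuming the Spark directory layout used above, is `./bin/pyspark --master local[4]`. Here `local[N]` runs Spark in a single process with N worker threads, and `local[*]` uses one thread per logical core. The hypothetical `notebook_command` helper below builds that command line; with the driver variables from spark-env.sh in place, the driver comes up as a Jupyter notebook.

```python
from pathlib import Path
from typing import List, Optional


def notebook_command(spark_home: str, threads: Optional[int] = 4) -> List[str]:
    """argv that starts pyspark in local mode with the given number of threads.

    threads=None selects local[*], i.e. one worker thread per logical core.
    """
    if threads is None:
        master = "local[*]"
    elif threads < 1:
        raise ValueError("threads must be >= 1")
    else:
        master = f"local[{threads}]"
    launcher = Path(spark_home).expanduser() / "bin" / "pyspark"
    return [str(launcher), "--master", master]
```

For example, `subprocess.run(notebook_command("~/spark-2.1.0-bin-hadoop2.7", os.cpu_count()))` sizes the thread count to the machine instead of hard-coding 4.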

If you executed all of the above on a remote machine from a local Linux box via SSH:

You can open an SSH tunnel as follows. This way, you can open the Jupyter notebook in your local browser instead of having to use the remote machine's browser via `ssh -X`. With the following tunnel, open your local browser at http://localhost:8889 and enter the token printed in your terminal in the previous step.

(The above gist has been successfully tested with Ubuntu 14.04 LTS on an Intel Xeon E5-2620 and an Intel Celeron N3160.)
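The tunnel can be opened with OpenSSH's local port forwarding, for example `ssh -N -L 8889:localhost:8888 user@remote-host`, where `user@remote-host` is a placeholder and 8888 assumes Jupyter's default port on the remote side (check the URL Jupyter prints, since it picks another port if 8888 is taken). `-N` runs no remote command and `-L` forwards the local port. The hypothetical `tunnel_command` helper builds the same argument list:

```python
from typing import List


def tunnel_command(remote: str, local_port: int = 8889, remote_port: int = 8888) -> List[str]:
    """argv for an SSH tunnel from localhost:local_port to the remote Jupyter port."""
    # -N: do not run a remote command, only forward ports.
    # -L: forward local_port on this machine to localhost:remote_port as seen
    #     from the remote host.
    return ["ssh", "-N", "-L", f"{local_port}:localhost:{remote_port}", remote]
```

Keep the tunnel running while you use http://localhost:8889 in the local browser.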